Why prefer declaring variables using auto? Let us count some of the reasons why…

Problem

JG Question

1. In the following code, what actual or potential pitfalls exist in each labeled piece of code? Which of these pitfalls would using auto variable declarations fix, and why or why not?

// (a)

void traverser( const vector<int>& v ) {

for( vector<int>::iterator i = begin(v); i != end(v); i += 2 )

// ...

}

// (b)

vector<int> v1(5);

vector<int> v2 = 5;

// (c)

gadget get_gadget();

// ...

widget w = get_gadget();

// (d)

function<void(vector<int>)> get_size

= [](const vector<int>& x) { return x.size(); };

Guru Question

2. Same question, subtler examples: In the following code, what actual or potential pitfalls exist in each labeled piece of code? Which of these pitfalls would using auto variable declarations fix, and why or why not?

// (a)

widget w;

// (b)

vector<string> v;

int size = v.size();

// (c) x and y are of some built-in integral type

int total = x + y;

// (d) x and y are of some built-in integral type

int diff = x - y;

if(diff < 0) { /*...*/ }

// (e)

int i = f(1,2,3) * 42.0;

Solution

As you worked through these cases, perhaps you noticed a pattern: The cases are mostly very different, but what they have in common is that they illustrate reason after reason motivating why (and how) to use auto to declare variables. Let’s dig in and see.

1. In the following code, what actual or potential pitfalls exist, which would using auto variable declarations fix, and why or why not?

(a) will not compile

// (a)

void traverser( const vector<int>& v ) {

for( vector<int>::iterator i = begin(v); i != end(v); i += 2 )

// ...

}

With (a), the most important pitfall is that the code doesn’t compile. Because v is const, you need a const_iterator. The old-school way to fix this is to write const_iterator:

vector<int>::const_iterator i = begin(v) // ok + requires thinking

However, that requires thinking to remember, “ah, v is a reference to const, I better remember to write const_ in front of its iterator type… and take it off again if I ever change v to be a reference to non-const… and also change the ‘vector’ part of i’s type if v is some other container type…”

Not that thinking is a bad thing, mind you, but this is really just a tax on your time when the simplest and clearest thing to write is auto:

auto i = begin(v) // ok, best

Using auto is not only correct and clear and simpler, but it stays correct if we change the type of the parameter to be non-const or pass some other type of container, such as if we make traverser into a template in the future.

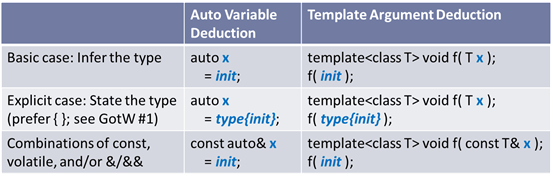

Guideline: Prefer to declare local variables using auto x = expr; when you don’t need to explicitly commit to a type. It is simpler, guarantees that you will use the correct type, and guarantees that the type stays correct under maintenance.
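To make the maintenance claim concrete, here is a minimal sketch of what a templated traversal might look like. The names count_elements and count_elements_demo are hypothetical and not part of the original example; the point is only that auto picks const_iterator for a const vector and some other iterator type for a different container, with no edits to the loop.

```cpp
#include <cassert>
#include <iterator>
#include <list>
#include <type_traits>
#include <utility>
#include <vector>

// Hypothetical generic variant of traverser: counts elements by walking
// the container with iterators whose type is left to the compiler.
template<class Container>
int count_elements(Container& c) {
    int n = 0;
    for (auto i = std::begin(c); i != std::end(c); ++i)
        ++n;
    return n;
}

// For a const vector, begin() yields const_iterator without being told.
static_assert(
    std::is_same<decltype(std::begin(std::declval<const std::vector<int>&>())),
                 std::vector<int>::const_iterator>::value,
    "begin() on a const vector yields const_iterator");

inline void count_elements_demo() {
    const std::vector<int> cv{1, 2, 3};
    std::list<double> l{1.0, 2.0};
    assert(count_elements(cv) == 3);  // Container deduced as const vector<int>
    assert(count_elements(l) == 2);   // same loop, different container type
}
```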

Although our focus is on the variable declaration, there’s another independent bug in the code: The += 2 increment can zoom you off the end of the container. When writing a strided loop, check your iterator increment against end on each increment (best to write it once as a checked_next(i,end) helper that does it for you), or use an indexed loop something like for( auto i = 0L; i < v.size(); i += 2 ) which is more natural to write correctly.
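One possible shape for the checked_next helper mentioned above is sketched here. The signature, including the defaulted step count, is an assumption made for illustration; sum_every_other and strided_loop_demo are likewise hypothetical names used only to exercise it.

```cpp
#include <cassert>
#include <iterator>
#include <vector>

// Hypothetical helper: advance `it` by up to n steps, stopping at `end`.
template<class It>
It checked_next(It it, It end,
                typename std::iterator_traits<It>::difference_type n = 1) {
    while (n-- > 0 && it != end)
        ++it;
    return it;
}

// Visits every second element without ever stepping past the end.
inline int sum_every_other(const std::vector<int>& v) {
    int sum = 0;
    for (auto i = std::begin(v); i != std::end(v);
         i = checked_next(i, std::end(v), 2))
        sum += *i;
    return sum;
}

inline void strided_loop_demo() {
    assert(sum_every_other({1, 2, 3, 4, 5}) == 9);  // 1 + 3 + 5
    assert(sum_every_other({1, 2, 3, 4}) == 4);     // 1 + 3
    assert(sum_every_other({}) == 0);               // empty: body never runs
}
```

With an even-sized vector, a plain i += 2 would land exactly on end and happen to work; with an odd-sized one it would step past end, which is the case the helper guards against.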

(b) and (c) rely on implicit conversions

// (b)

vector<int> v1(5); // 1

vector<int> v2 = 5; // 2

Line 1 uses direct initialization, which is explicit, and so can call vector’s explicit constructor that takes an initial size.

Line 2 doesn’t compile because its syntax won’t call an explicit constructor. As we saw in GotW #1, it really means “convert 5 to a temporary vector<int>, then move-construct v2 from that,” so line 2 only works for types where the conversion is not explicit.

Some people view the asymmetry between 1 and 2 as a pitfall, at least conceptually, for several reasons: First, the syntaxes are not quite the same and so learning when to use each can seem like finicky detail. Second, some people like line 2’s syntax better but have to switch to line 1 to get access to explicit constructors. Finally, with this syntax, it’s easy to forget the (5) or = 5 initializer, and then we’re into case 2(a), which we’ll get to in a moment.

If we use auto, we have a single syntax that is always obviously explicit:

auto v2 = vector<int>(5);
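The asymmetry between lines 1 and 2 can be checked at compile time with standard type traits. This is only a sketch; make_five_zeros and explicit_ctor_demo are hypothetical names added for illustration.

```cpp
#include <cassert>
#include <type_traits>
#include <vector>

// Direct-initialization, as in line 1, may use the explicit size constructor.
static_assert(std::is_constructible<std::vector<int>, int>::value,
              "vector<int> v1(5) is allowed");

// Copy-initialization from an int, as in line 2, needs an implicit
// conversion from int to vector<int>, and none exists.
static_assert(!std::is_convertible<int, std::vector<int>>::value,
              "vector<int> v2 = 5 is rejected");

// The auto form names the type on the right-hand side and always works.
inline std::vector<int> make_five_zeros() {
    auto v2 = std::vector<int>(5);
    return v2;
}

inline void explicit_ctor_demo() {
    assert(make_five_zeros().size() == 5u);
    assert(make_five_zeros().front() == 0);  // elements are value-initialized
}
```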

Next, case (c) is similar to (b):

// (c)

gadget get_gadget();

// ...

widget w = get_gadget();

This works, assuming that gadget is implicitly convertible to widget, but creates a temporary object. That’s a potential performance pitfall, as the creation of the temporary object is not at all obvious from reading the call site alone in a code review. If we can use a gadget just as well as a widget in this calling code and so don’t explicitly need to commit to the widget type, we could write the following which guarantees there is no implicit conversion because auto always deduces the basic type exactly:

// better, if you don't need an explicit type

auto w = get_gadget();

Guideline: Prefer to declare local variables using auto x = expr; when you don’t need to explicitly commit to a type. It is efficient by default and guarantees that no implicit conversions or temporary objects will occur.

By the way, if you’ve been wondering whether that “=” in auto x = expr; causes a temporary object plus a move or copy, wonder no longer: No, it constructs x directly. (See GotW #1.)

Now, what if we said widget here because we know about the conversion and really do want to deal with a widget? Then writing auto is still more self-documenting:

// better, if you do need to commit to an explicit type

auto w = widget{ get_gadget() };

Guideline: Consider declaring local variables auto x = type{ expr }; when you do want to explicitly commit to a type. It is self-documenting to show that the code is explicitly requesting a conversion.
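The three declaration styles for case (c) can be compared side by side. The article does not define gadget or widget, so the definitions below are stand-ins assumed purely for illustration, as is the function conversions_demo.

```cpp
#include <cassert>
#include <type_traits>

// Stand-in types (assumptions, not from the article).
struct gadget { int id = 7; };
struct widget {
    int id;
    widget(const gadget& g) : id(g.id) {}  // implicit converting constructor
};
inline gadget get_gadget() { return gadget{}; }

inline int conversions_demo() {
    widget w1 = get_gadget();            // implicit conversion, easy to miss
    auto   w2 = get_gadget();            // no conversion: w2 is a gadget
    auto   w3 = widget{ get_gadget() };  // conversion is visible at a glance

    static_assert(std::is_same<decltype(w2), gadget>::value,
                  "auto keeps the exact type");
    static_assert(std::is_same<decltype(w3), widget>::value,
                  "explicit commitment to widget");
    return w1.id + w2.id + w3.id;
}
```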

Note that this last version technically requires a move operation, but compilers are explicitly allowed to elide that and construct w directly—and compilers routinely do that, so there is no performance penalty in practice.

(d) creates an indirection, and commits to a single type

// (d)

function<void(vector<int>)> get_size

= [](const vector<int>& x) { return x.size(); };

Case (d) has two problems, and auto can help with both of them. (Bonus points if you noticed that a form of “auto” is actually already helping in a third way.)

First, the lambda object is converted to a function<>. That can be appropriate when passing or returning the lambda to a function, but it costs an indirection because function<> has to erase the actual type and create a wrapper around its target to hold it and invoke it. In this case, we appear to be using the lambda locally, and so the correct default way to capture it is using auto, which binds to the exact (compiler-generated and otherwise-unutterable-by-you) type of the lambda and so doesn’t incur an indirection:

// partly improved

auto get_size = [](const vector<int>& x) { return x.size(); };

Guideline: Prefer to use auto name = to name a lambda function object. Use std::function</*…*/> name = only when you need to rebind it to another target or pass it to another function that needs a std::function<>.

Second, the lambda commits to a specific argument type—it only works with vector<int>, and not with vector<double> or set<string> or anything else that is also able to report a .size(). The way to fix that is to write another auto:

// best

auto get_size = [](const auto& x) { return x.size(); };

// yes, you could use this "too cute" variation for slightly less typing

// [](auto&& x) { return x.size(); };

// but you'll also get less const-enforcement and that isn't a good deal

This still creates just a single object, but with a templated function call operator so that it can be invoked with different types of arguments, and so will work with any type of container that supports calling .size()…

Guideline: Prefer to use auto lambda parameter types. They are just as efficient as explicit parameter types, and allow you to call the same lambda with different argument types.

… and did you notice the “third auto” that was there all along? Even in the original example, we’ve been implicitly using automatic type deduction in a third place by allowing the lambda to deduce its return type, and so now with the fully generic “best” version of the code that return type will always be exactly whatever .size() returns for whatever kind of object we’re calling .size() on, which can be different for different argument types. All in all, that’s pretty nifty.

Guideline: Prefer to use implicit return type deduction for lambda functions.
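As a quick check of the last two guidelines together, the sketch below calls one generic lambda with two unrelated container types and confirms that the deduced return type follows each container's own size_type. It assumes a C++14 compiler; the lambda is placed at namespace scope only so the example is easy to exercise, and generic_lambda_demo is a hypothetical name.

```cpp
#include <cassert>
#include <set>
#include <string>
#include <type_traits>
#include <vector>

// One closure object whose call operator is a template.
const auto get_size = [](const auto& x) { return x.size(); };

inline void generic_lambda_demo() {
    std::vector<int> v{1, 2, 3};
    std::set<std::string> s{"a", "b"};

    assert(get_size(v) == 3u);
    assert(get_size(s) == 2u);

    // The return type is deduced per call from whatever .size() returns.
    static_assert(std::is_same<decltype(get_size(v)),
                               std::vector<int>::size_type>::value,
                  "matches vector's size_type");
    static_assert(std::is_same<decltype(get_size(s)),
                               std::set<std::string>::size_type>::value,
                  "matches set's size_type");
}
```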

2. Same question, subtler examples: In the following code, what actual or potential pitfalls exist, which would using auto variable declarations fix, and why or why not?

(a) might leave the variable uninitialized.

// (a)

widget w;

This creates an object of type widget. However, we can’t tell just looking at this line whether it’s initialized or contains garbage values. As noted in GotW #1, if widget is a built-in type or aggregate type, its members won’t get initialized. Uninitialized variables should be avoided by default, and only used deliberately in cases where you really want to start with an uninitialized memory region for performance reasons—notably when you have a large object, such as an array, that is expensive to zero-initialize and is immediately going to be overwritten anyway, such as if it’s being used as an “out” parameter.

Guideline: Always initialize variables, except only when you can prove garbage values are okay, typically because you will immediately overwrite the contents.

Would auto help here? Indeed it would:

auto w = widget{}; // guaranteed to be initialized

One of the key benefits of declaring a local variable using auto is that the “=” is required—there’s no way to declare the variable without setting an initial value. Further, this is explicit and clear just from reading the above variable declaration on its own during a code review, without having to go inquire in the type’s header about the exact details of the type and poll the neighborhood for character references who will swear it’s not now, and is even under maintenance never likely to become, an aggregate.

Guideline: Prefer to declare local variables using auto. It guarantees that you cannot accidentally leave the variable uninitialized.
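A small sketch of the difference, using a hypothetical aggregate named point (an assumption, since the article leaves widget undefined). The commented-out lines show the forms this guideline steers away from.

```cpp
#include <cassert>

struct point { int x; int y; };  // hypothetical aggregate, no constructors

inline int sum_of_fresh_point() {
    // point p;        // compiles, but p.x and p.y would hold garbage
    // auto q;         // does not compile: auto requires an initializer
    auto p = point{};  // value-initialized: both members are zero
    auto n = int{};    // zero as well
    return p.x + p.y + n;
}

inline void initialization_demo() {
    assert(sum_of_fresh_point() == 0);
}
```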

(b) might perform a silent narrowing conversion.

// (b)

vector<string> v;

int size = v.size();

This will compile, run, and sometimes lose information because it uses an implicit narrowing conversion. Not the safest route to a happy weekend when the bug report from the field comes in on Friday night—normally from a large and important customer, because the bug will be exercised only with larger data sizes.

Here’s why: The return type of vector<string>::size() is vector<string>::size_type, but what’s that? It depends on your implementation, because the standard leaves it implementation-defined. But one thing I guarantee you is that “it ain’t no int,” for at least two reasons, which lead to at least two ways this can lose information by silent narrowing:

- Sign:

size_type is required to be an unsigned integer type, so this code is asking to convert it to a signed value. That’s bad enough even if sizeof(size_type) == sizeof(int) and it throws away the high bit (and with it the upper half of the representable values) to make room for the sign bit. It’s worse than that if sizeof(size_type) > sizeof(int), which brings us to the second problem, because that’s actually likely…

- Size:

size_type basically needs to be the same size as a pointer, since it may have to represent any offset in a vector<char> that is larger than half the machine’s address space. In 64-bit code, 64-bit pointers mean 64-bit size_types. However, if on the same system an int is still 32 bits for compatibility (and this is common), then size_type is bigger than int, and converting to int throws away not just the high-order bit, but over half of the bits and the vast majority of the representable values.

Of course, you won’t notice on small vectors as long as .size() < 2^(CHAR_BIT*sizeof(int)-1). That doesn’t mean it’s not a bug; it just means it’s a latent bug.

Does auto help? Yes indeed:

auto size = v.size(); // exact type, guaranteed no narrowing

Guideline: Prefer to declare local variables using auto. It guarantees that you get the exact type and cannot accidentally get narrowing conversions.
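The size problem can be modeled portably with fixed-width unsigned types, where the result of the conversion is well defined. This is a sketch under that assumption: narrow_to_32 and size_keeps_exact_type are hypothetical names, and the 64-to-32-bit pair stands in for a 64-bit size_type being stored in a 32-bit int.

```cpp
#include <cassert>
#include <cstdint>
#include <string>
#include <type_traits>
#include <vector>

// Models a 64-bit size being stored into a 32-bit variable.
constexpr std::uint32_t narrow_to_32(std::uint64_t big) {
    return static_cast<std::uint32_t>(big);
}

// 2^32 + 5 elements would be reported as 5: the high bits simply vanish.
static_assert(narrow_to_32((std::uint64_t{1} << 32) + 5) == 5u,
              "high-order bits are dropped");

// With auto, the variable has exactly the type that size() returns.
inline bool size_keeps_exact_type() {
    std::vector<std::string> v;
    auto size = v.size();
    return std::is_same<decltype(size),
                        std::vector<std::string>::size_type>::value
        && std::is_unsigned<decltype(size)>::value;
}

inline void narrowing_demo() {
    assert(size_keeps_exact_type());
}
```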

(c), (d), and (e) have potential narrowing and signedness issues.

// (c) x and y are of some built-in integral type

int total = x + y;

In case (c), we might also have a narrowing conversion. The simplest way to see this is that if either x or y is larger than int, which is what we’re trying to store the result into, then we’ve definitely got a silent narrowing conversion here, with the same issues as already described in (b). And even if x and y are ints today, if under maintenance the type of one later changes to something like long or size_t, the code silently becomes lossy—and possibly only on some platforms, if it changes to long and that’s the same size as int on some platforms you target but larger than int on others.

Note that, even if you know the exact types of x and y, you will get different types for x+y on different platforms, particularly if one is signed and one is unsigned. If both x and y are signed, or both are unsigned, and one’s type has more bits than the other, that’s the type of the result. If one is signed and the other is unsigned then other rules kick in, and the size and signedness of the result can vary on different platforms depending on the relative actual sizes and the signedness of x and y on that platform. (This is one of the consequences of C and C++ not standardizing the sizes of the built-in types; for example, we know a long is guaranteed to be at least as big as an int, but we don’t know how many bits each is, and the answer varies by compiler and platform.)

Does auto help here? Almost always “yes,” but in one case “yes with a little help you really want to reach for anyway.”

By default, write for correctness, clarity, and portability first: To avoid lossy narrowing conversions, auto is your portability pal and you should use it by default. Writing auto is much better than writing it out by hand as std::common_type< decltype(x), decltype(y) >.

auto total = x + y; // exact type, guaranteed no narrowing

Guideline: Prefer to declare local variables using auto. It guarantees that you get the exact type and so is the simplest way to portably spell the implementation-specific type of arithmetic operations on built-in types, which vary by platform, and ensure that you cannot accidentally get narrowing conversions when storing the result.
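A few of the conversion rules that hold on every conforming platform can be written down as compile-time checks. The helper add below is a hypothetical function template added for illustration; it simply returns x + y with the type the language gives that expression.

```cpp
#include <type_traits>

// Operands narrower than int are promoted, so short + short is int.
static_assert(std::is_same<decltype(short{1} + short{2}), int>::value,
              "short + short yields int");

// Same signedness, different rank: the wider type wins.
static_assert(std::is_same<decltype(1 + 2LL), long long>::value,
              "int + long long yields long long");

// Same rank, mixed signedness: the unsigned type wins.
static_assert(std::is_same<decltype(1u + 2), unsigned int>::value,
              "unsigned + int yields unsigned");

// Hypothetical helper: the return type is exactly the type of x + y.
template<class X, class Y>
constexpr auto add(X x, Y y) -> decltype(x + y) {
    return x + y;
}

static_assert(std::is_same<decltype(add(short{1}, 2L)), long>::value,
              "short + long yields long");
```

Mixed cases such as unsigned int plus long are deliberately left out, because their result type depends on the relative sizes of the two types on the platform.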

However, what if in rare cases this code may be in a tight loop where performance matters, and auto may select a wider type than you know you need to store all possible values? For example, in some cases performing arithmetic using uint64_t instead of uint32_t could be twice as slow. If you first prove that this actually matters using hard profiler data, and then further prove by performing other validation that you won’t (or won’t care if you do) encounter results that would lose value by narrowing, then go ahead and commit to an explicit type—but prefer to do it using the following style:

// rare cases: use auto + <cstdint> type

auto total = uint_fast64_t{ x+y }; // total is an unsigned 64-bit value

// ^ see note [1]

// or use auto + size-preserving signed/unsigned helper [2]

auto total = as_unsigned( x+y ); // total is unsigned and size of x+y

Case (d) is similar:

// (d) x and y are of some built-in integral type

int diff = x - y;

if(diff < 0) { /*...*/ }

This time, we’re doing a subtraction. No matter whether x and y are signed or not, putting the answer in a signed variable like this is the right thing to do—the result could be negative, after all.

However, we have two issues. The first, again, is that int may not be big enough to avoid truncating the result, so we might lose information if x - y produces something larger than an int. Using auto can help with that.

The second is that x - y might give a strange answer, which isn’t the programmer’s fault but is something you want to remember about arithmetic in C and C++. Consider this code:

unsigned long x = 42;

signed short y = 43;

auto diff = x - y; // one actual result: 18446744073709551615

if(diff < 0) { /*...*/ } // um, oops – branch won't be taken

“Wait, what?” you ask. On nearly all platforms, an unsigned long is bigger than a signed short, and because of the usual arithmetic conversions the type of x - y, and therefore of diff, will be… unsigned long. Which is, well, not very signed. So depending on the types of x and y, and depending on your actual platform, it may be that the branch won’t be taken, which clearly isn’t the same as the original code.

Guideline: Combine signed and unsigned arithmetic carefully.

Before you say, “then I always want signed!” remember that if you overflow then unsigned arithmetic wraps, which can be valid for your use, whereas signed arithmetic has undefined behavior, which is quite unlikely to be useful. Sometimes you really need signed, and sometimes you really need unsigned, even though often you won’t care.

From observing auto’s effect in case (d), it might seem like auto has helped one problem… but was it at the expense of creating another?

Yes, on the one hand, auto did indeed help us: Using auto ensured we could write portable and correct code where the result wasn’t needlessly narrowed. If we didn’t care about signedness, which is often true, that’s quite sufficient.

On the other hand, using auto might not preserve signedness in a computation like x - y that’s supposed to return something with a sign, or it might not preserve unsignedness when that’s desirable. But this isn’t so much an issue with auto itself as that we have to be careful when combining signed and unsigned arithmetic, and by binding to an exact type auto is exposing this issue with some code that might potentially be already nonportable, or have corner cases the developer wasn’t aware of when writing it.

So what’s a good answer? Consider using auto together with the as_signed or as_unsigned conversion helper we saw before, which is used in lieu of a cast to a specific type; the helper is written out more fully in the endnotes. [2] Then we get the best of both worlds—we don’t commit to an explicit type, but we ensure the basic size and signedness in portable code that will work as intended on many different compilers and platforms.

Guideline: Prefer to use auto x = as_signed(integer_expr); or auto x = as_unsigned(integer_expr); to store the result of an integer computation that should be signed or unsigned. Using auto together with as_signed or as_unsigned makes code more portable: the variable will both be large enough and preserve the required signedness on all platforms. (Signed/unsigned conversions within integer_expr may still occur.)
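Applied to the x - y example from case (d), the guideline might look like the sketch below. The as_signed shown here is one possible spelling of the helper described in the endnotes, and difference_is_negative is a hypothetical name. One assumption to flag: converting an out-of-range unsigned value to a signed type is implementation-defined before C++20, so the negative result relies on the two's complement behavior that mainstream compilers provide and that C++20 guarantees.

```cpp
#include <cassert>
#include <type_traits>

// One possible spelling of the size-preserving helper.
template<class T>
constexpr typename std::make_signed<T>::type as_signed(T t) {
    return static_cast<typename std::make_signed<T>::type>(t);
}

inline bool difference_is_negative() {
    unsigned long x = 42;
    short y = 43;

    auto raw  = x - y;             // unsigned long: wraps to a huge value
    auto diff = as_signed(x - y);  // long: same size, but signed

    static_assert(std::is_same<decltype(raw), unsigned long>::value,
                  "plain auto binds to the unsigned result type");
    static_assert(std::is_same<decltype(diff), long>::value,
                  "as_signed changes only the signedness");

    return raw > 0 && diff < 0;    // only the signed copy takes the branch
}

inline void signedness_demo() {
    assert(difference_is_negative());
}
```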

Finally, case (e) brings floating point into the picture:

// (e)

int i = f(1,2,3) * 42.0;

Here we have our by-now-yawnworthy-typical narrowing—and an easy case because it isn’t even hiding, it’s saying int and 42.0 right there in the same breath, which is narrowing almost regardless of what type f returns.

Does auto help? Yes, in making our code self-documenting and more reviewable, as we noted before. If we follow the auto x = type{expr}; declaration style, we would be (happily) forced to write the conversion explicitly, and when we initially use { } we get an error that in fact it’s a narrowing conversion, which we acknowledge (again explicitly) by switching to ( ):

auto i = int( f(1,2,3) * 42.0 );

This code is now free of implicit conversions, including implicit narrowing conversions. If our team’s coding style says to use auto x = expr; or auto x = type{expr}; wherever possible, then in a code review just seeing the ( ) parens can immediately connote explicit narrowing; adding a comment doesn’t hurt either.
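Here is a small sketch of that progression. The article never defines f, so the version below is a stand-in chosen so that the arithmetic is exact in binary floating point; truncation_demo is also a hypothetical name.

```cpp
#include <cassert>

// Stand-in for the article's f (an assumption): f(1,2,3) returns 6.25.
constexpr double f(int a, int b, int c) {
    return a + b + c + 0.25;
}

// auto i = int{ f(1,2,3) * 42.0 };  // error: narrowing from double to int
// Parentheses acknowledge the narrowing explicitly:
static_assert(int( f(1, 2, 3) * 42.0 ) == 262,
              "262.5 is truncated toward zero");

inline void truncation_demo() {
    auto i = int( f(1, 2, 3) * 42.0 );
    assert(i == 262);
}
```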

But for floating point calculations, can using auto by itself hurt? Consider this example, contributed by Andrei Alexandrescu:

float f1 = /*...*/, f2 = /*...*/;

auto f3 = f1 + f2; // correct, but on some compilers/platforms...

double f4 = f1 + f2; // ... this might keep more bits of precision

As Alexandrescu notes: “Machines are free to do intermediate calculations in a larger precision than the target, and in many cases (and traditionally in C) calculations are done in double precision. So for f3 we have a sum done in double precision, which is then truncated down to float. For f4, the sum is preserved at full precision.”

Does this mean using auto creates a potential flaw here? Not really. In the language, the type of f1 + f2 is still float, and the naked auto maintains that exact type for us. However, if we do want to follow the pattern of switching to double early in a complex computation, we can and should say so:

float f1 = /*...*/, f2 = /*...*/;

auto f5 = double{f1} + f2;
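The language-level types involved here can be verified directly. In this sketch, widen_then_add and widening_demo are hypothetical names; the static_asserts only restate what the paragraph above says about the types of the two expressions.

```cpp
#include <cassert>
#include <type_traits>

inline double widen_then_add(float f1, float f2) {
    auto f3 = f1 + f2;          // float: naked auto keeps the exact type
    auto f5 = double{f1} + f2;  // double: the widening is stated up front

    static_assert(std::is_same<decltype(f3), float>::value,
                  "float + float is float");
    static_assert(std::is_same<decltype(f5), double>::value,
                  "double + float is double");
    (void)f3;  // only its type matters for this illustration
    return f5;
}

inline void widening_demo() {
    // 1.5 and 2.25 are exactly representable, so the sum is exact.
    assert(widen_then_add(1.5f, 2.25f) == 3.75);
}
```

Note that double{f1} compiles with braces because float to double is a widening conversion, not a narrowing one.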

Summary

We’ve seen a number of reasons to prefer to declare variables using auto, optionally with an explicit type if you do want to commit to a specific type.

If you’ve observed a pattern in this GotW’s Guidelines, you’ll already have a sense of what’s coming in GotW #94… a Special Edition on, you guessed it, auto style.

Notes

[1] Another reason to prefer using the <cstdint> typedef names is that, due to a quirk in the C++ language grammar, only a single-word type is allowed where uint_fast64_t appears in this example. That’s fine nearly always because it’s all you need for class types and all typedef and using alias names and most built-in types, but you can’t directly name arrays or the multi-word built-in types like unsigned int or long long in that position; for the latter, use the uintNN_t-style typedef names instead. The exact ones, such as uint64_t, are “optional” in the standard, but they are in the standard and expected to be widely implemented. The “least” and “fast” ones are required, so if you don’t have uint64_t you can use uint_least64_t or uint_fast64_t, as the example does.

[2] The helpers preserve the size of the type while changing only the signedness. Thanks to Andrei Alexandrescu for this basic idea; any errors are mine, not his. The C++98 way is to provide a set of overloads for each type, but a modern version might look something like the following which uses the C++11 std::make_signed/make_unsigned facilities.

// C++11 version

//

template<class T>

typename make_signed<T>::type as_signed(T t)

{ return typename make_signed<T>::type(t); }

template<class T>

typename make_unsigned<T>::type as_unsigned(T t)

{ return typename make_unsigned<T>::type(t); }

Note that with C++14 this gets even sweeter, using auto return type deduction to eliminate typename and repetition, and the _t alias to replace ::type:

// C++14 version, option 1

//

template<class T> auto as_signed (T t){ return make_signed_t <T>(t); }

template<class T> auto as_unsigned(T t){ return make_unsigned_t<T>(t); }

or you can equivalently write these function templates as named lambdas:

// C++14 version, option 2

//

auto as_signed =[](auto x){ return make_signed_t <decltype(x)>(x); };

auto as_unsigned =[](auto x){ return make_unsigned_t<decltype(x)>(x); };

Sweet, isn’t it? Once you have a compiler that supports these features, pick whichever suits your fancy.

Acknowledgments

Thanks in particular to Scott Meyers and Andrei Alexandrescu for their time and insights in reviewing and discussing drafts of this material. Thanks also to the following for their feedback to improve this article: mttpd, Jim Park, Yuri Khan, Arne, rhalbersma, Tom, Martin Ba, John, Frederic Dumont, Sebastian.

