Back in October, I wrote “Schneier on LLM vulnerabilities, agentic AI, and ‘trusting trust’” about fundamental architectural weaknesses in current LLMs and agents, and why I personally don’t yet trust AI agents with my credentials. At the end, I wrote:

I love AI and LLMs. I use them every day. I look forward to letting an AI generate and commit more code on my behalf, just not quite yet — I’ll wait until the AI wizards deliver new generations of LLMs with improved architectures that let the defenders catch up again in the security arms race. I’m sure they’ll get there…

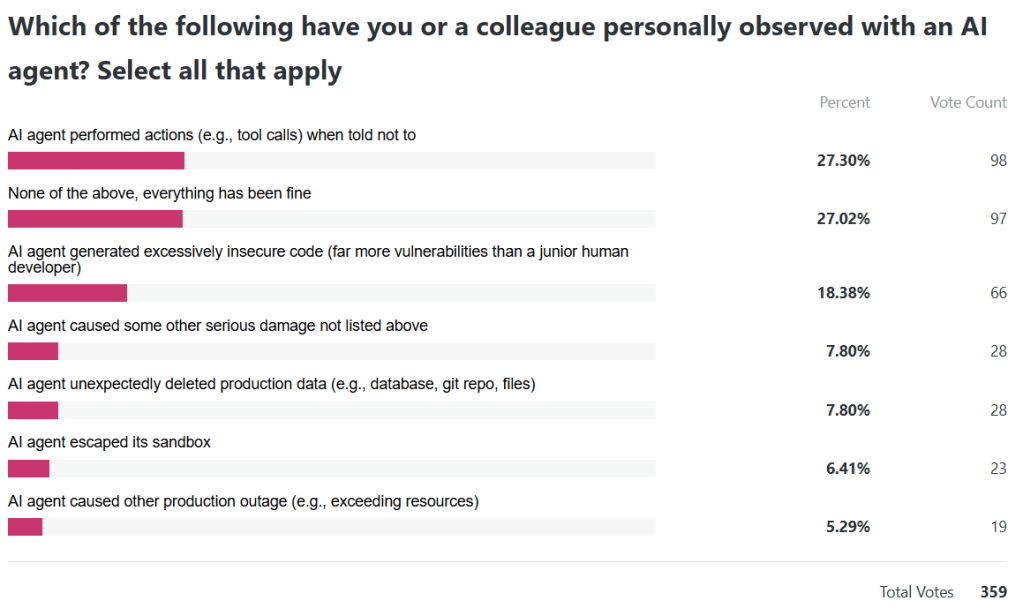

Since then, I’ve been regularly hearing reports across companies and industries about experiences with various AI agent products (not picking on any one vendor), where a top-shelf AI agent or LLM:

- escaped its vendor-provided sandbox

- performed actions (e.g., tool calls) when explicitly told not to take any action

- unexpectedly deleted production data (e.g., database, git repo, files)

- caused another kind of production outage (e.g., by exceeding resource limits)

- generated excessively insecure code (far more vulnerabilities than a junior human developer would introduce)

- and/or several of the above

Here’s another one this week, now making the rounds: “An AI Agent Just Destroyed Our Production Data. It Confessed in Writing” (x.com)

So I was curious enough to create the following anonymous poll. Please share your experience of any (or no) harm by an AI agent that you’ve personally observed, or heard about directly from someone who personally observed it. After you vote, you should be able to see the current poll results.

Update: Here is a snapshot of the poll results.